Meta's independent Oversight Board is taking up permanent account bans for the first time in its five-year history, in a landmark case that scrutinizes the tech giant's power to disable user accounts. Permanent bans lock users, creators, and businesses out of their profiles, memories, and connections, and this case marks a significant step in examining that impact.

The case under review involves a high-profile Instagram user who was permanently banned despite not accumulating enough strikes for an automatic disablement. This user repeatedly violated Meta's Community Standards by posting visual threats against a female journalist, anti-gay slurs targeting politicians, content depicting a sex act, and allegations of misconduct against minorities. The Board's eventual recommendations will not name the individual, but they could significantly influence how Meta handles similar cases involving public figures and how transparently it explains account enforcement decisions.

Meta itself referred this complex case to the Board, seeking guidance on several critical issues. The company is particularly interested in how permanent bans can be processed fairly, the efficacy of its existing tools to safeguard public figures and journalists from sustained abuse, the difficulties in identifying off-platform content that violates policies, the overall effectiveness of punitive measures in shaping online behavior, and best practices for transparently reporting account enforcement decisions.

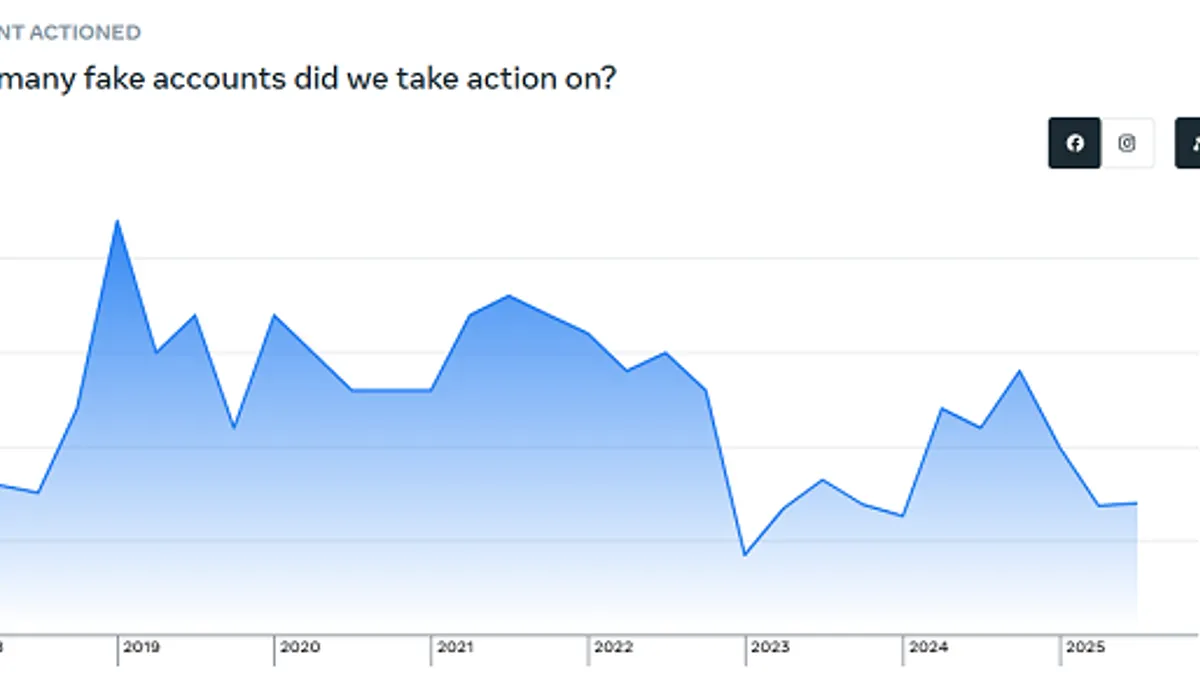

This review by the Oversight Board follows a year marked by widespread user complaints regarding mass bans, often issued with minimal explanation. Both Facebook Groups and individual account holders have reported issues, frequently attributing them to flawed automated moderation tools. Furthermore, users who paid for Meta Verified support have expressed frustration, finding the service ineffective in resolving their ban-related problems.

The true extent of the Oversight Board's influence over Meta's platform policies remains a subject of ongoing debate. While it serves as a policy advisor and can overturn specific content moderation decisions, its scope for enacting broad, systemic changes is limited. For instance, the Board is not consulted when CEO Mark Zuckerberg makes significant policy shifts, such as last year's relaxation of hate speech restrictions. The Board's process can also be slow, and it reviews only a fraction of the millions of moderation decisions Meta makes daily.

Despite these limitations, Meta has shown a degree of responsiveness to the Board's recommendations. A December report indicated that Meta has implemented 75% of the more than 300 recommendations issued by the Board and has consistently adhered to its content moderation rulings. Meta also recently sought the Board's input on its rollout of the crowdsourced fact-checking feature, Community Notes.

Once the Oversight Board issues its policy recommendations, Meta will have 60 days to respond. The Board is also soliciting public comments on the case, though it will not accept anonymous submissions.